Customer Meets AI: The Most Absurd AI Myths in Online Marketing

AI is changing the playing field in online marketing—chaotically and with contradictory effects. Between promises of salvation and doomsday scenarios, downright absurd AI myths are emerging that misdirect budgets, frustrate teams, and slow down projects.

We want to clear this up: hands-on, based on real project experience and a healthy dose of skepticism. Where does AI actually help? Where does it hurt? Amid all the automation hype, where do skilled craftsmanship, strategy, and plain common sense still have their place? And what mindset should marketers bring to ChatGPT and other tools?

The following chapters bundle our experiences from client projects and research from current sources. We hope this makes steering your company, organization, or institution just a little bit easier. Mythbusting, let’s go!

1. “ChatGPT Writes Better Texts Than Humans”

It’s easy to see how people arrive at this mistaken belief: In the 2023 PIAAC study, 22% of German adults reach only proficiency level 1 or below in literacy. Just 14% make it to the top levels 4 and 5, where they can confidently understand and critically evaluate long, dense texts.

In the first PIAAC round in 2012, however, the share of these “low performers” was only 17.5%. It has therefore risen by 4.5 percentage points over the years, a significant loss of competence.
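Percentage points and percent are easily confused. Here is the calculation behind the figure, using only the two PIAAC shares quoted above:

```latex
\Delta_{\text{pp}} = 22\,\% - 17.5\,\% = 4.5 \text{ percentage points}
\qquad
\Delta_{\text{rel}} = \frac{22}{17.5} - 1 \approx 0.257 \approx 26\,\%
```

In relative terms, the group of low performers has therefore grown by about a quarter since 2012.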

This growing group with very weak reading and writing skills helps explain why ChatGPT copy is often perceived as “better than human”: Compared with what roughly a fifth of the population can write, AI-generated texts look orthographically clean, eloquent, and usually factually coherent.

At the top end of the performance spectrum, the picture looks very different: High performers have the intellectual capacity to write at a very high level. Their conceptual understanding and human awareness give them original ideas and genuine creativity, whereas ChatGPT, as an artificial neural network, can only calculate probabilities and string words together.

This is precisely why the greatest value emerges when humans and AI work together. The chatbot delivers split-second research, variety, and formal corrections, while the expert orchestrates, curates, and condenses. The result has depth and entertainment value and outperforms what either one could achieve alone. For companies, it’s therefore still worthwhile to hire real top-tier writers.

Demand for this dual competence is likely to grow because the talent funnel is narrowing. In the PISA study, reading proficiency among 15-year-olds in Germany fell from 509 points (2015) to just 480 points (2022)—the lowest value since the survey began. Demographic change, migration without sufficient language support, learning gaps caused by the pandemic, and media consumption dominated by TikTok shorts instead of books all suggest that the share of weak writers will continue to rise.

In short: ChatGPT raises the average, but top-tier authors raise ChatGPT—and will only become more valuable in a society with growing skill gaps.

2. “Users Can’t Tell Whether Content Is AI-Generated Anyway”

It’s true that users sometimes can’t tell AI-generated text from human writing—but they behave differently as soon as the origin is disclosed.

A 2024 study in PNAS compared Bing Chat responses with human answers. In blind evaluations, the AI performed better (avg. 5.74 vs. 5.17 on a 7-point scale). But when the exact same answer was labeled as “AI-generated”, its rating dropped by almost 0.7 points—purely due to the label, without any change in the text.

A survey by MIT Sloan confirms this pattern: Unlabeled ChatGPT articles were often preferred, but once AI authorship was openly declared, content with human involvement received noticeably better ratings (“human favoritism”). Consumers clearly value a recognizable human touch, which argues against full automation.

The ability to recognize unlabeled AI texts varies from user to user. Companies that sell high-quality or complex products and services in particular should respect the intelligence of their potential top customers if they want to build a trust-based relationship.

In short: AI can deliver content that readers initially appreciate—but potential transparency requirements and the desire for a human component can flip that perception. The smart strategy, therefore, is to use AI carefully under human guidance and to prompt with nuance. Then critically scrutinize the output, re-prompt if needed, and finally assemble the results into a coherent big picture. That way you keep time efficiency, precision, and credibility in balance.

3. “SEO Has Become Pointless Since AI”

Many clients fear that chatbots and Google’s AI Overviews will shrink search volume and click-through rates—and with them any investment in SEO. It’s true that by 2025, Google’s global search market share fell below 90% for the first time in ten years, and analysts estimate that ChatGPT already siphons off 15–20% of daily search volume.

But AI is less a gravedigger than a stress test: “AI hasn’t broken SEO, it has exposed it,” as Search Engine Land puts it. Artificial intelligence punishes exactly those shortcut methods that don’t focus on user value, but on tricks like keyword stuffing and link schemes. Websites that have always prioritized search intent, substantial content, and long-term value are maintaining their rankings—or even gaining ground.

Sustainable SEO is not a bag of tricks; it focuses on creating original SEO content that is precisely optimized for the search intent behind a keyword—and thus accurately meets the information needs of its target audiences.

With every additional search-intent-optimized web page, a niche website gradually emerges that signals experience, expertise, authority, and trustworthiness (E-E-A-T)—visible in Google and cited as a source in AI answers.

Coming soon: Does AI spell the end of SEO? (planned article on the svaerm blog)

In short: AI cuts traffic for shady quick-and-dirty tactics but rewards websites that actually solve problems. Anyone who serves search intent better than the bots stays visible everywhere.

4. “AI Should Make Everything Cheaper”

The logic sounds compelling: If AI can generate text, images, or code in seconds, prices in online marketing should automatically drop.

Prices, however, do not form in a vacuum. AI operates within an economic system where money is created through credit, government debt is growing, and prices rise due to inflation. These factors can dampen or even completely outweigh any potential price reductions.

AI doesn’t just increase productivity; it also intensifies competition. The battle for limited visibility and attention gets tougher—and it takes extra time to sift through information, curate it, and translate it into competitive offerings.

To use AI, companies first pay for licenses or tokens. If the AI has to be customized, fine-tuned on proprietary data, or even newly developed, costs go up. Locally hosted models also require expensive technical infrastructure, and in some cases companies have to hire AI engineers.

During implementation, you also pay a skill premium for senior professionals: Juniors often don’t notice when AI hallucinates or misses the point. Generative AI is like a pressure washer in the garden: It does in minutes what used to take hours—but only if you aim the jet at exactly the right spot. Otherwise, things don’t get clean, or you accidentally strip the plaster off the wall.

Even after deployment, risk premiums can apply: incorrect or legally sensitive AI outputs can lead to brand damage, compliance fines, or lawsuits. Companies factor these contingencies into their calculations.

Practical example

And whether AI makes an online marketing project cheaper or more expensive also depends on what you compare it to: For a regionalization campaign in India, we used Midjourney to generate visuals of Indian models in specialty chemical applications. Numerous prompt revisions and manual post-processing made the images more expensive than stock photos, but still far cheaper than a real-world photo shoot in India—and without AI, the project wouldn’t have been feasible at all.

In short: AI shifts cost structures; it doesn’t drive prices down across the board. Prices still depend on professional expertise, project setup, risks, and macroeconomics—which is why “Everything will get cheaper” remains a myth.

5. “It’s a Good Idea to Generate Agency Briefings With AI”

ChatGPT can spit out complete agency briefings within seconds. But the requirements it formulates are usually more detailed and complex than the project and its budget can realistically support.

Practical example

A striking example came from a mid-sized courier company. The contact person sent us a 60-page PDF exported directly from the chatbot and wasn’t familiar with many of the details himself. Clarifying the assignment and estimating the effort alone would have used up the prospect’s entire budget.

Even when used purely as an idea generator, AI often uses a sledgehammer to crack a nut: For a website with zero traffic, it recommended a detailed SEO visibility analysis; before a full relaunch, it demanded a page experience audit of the old site. The user didn’t notice that the AI was heading in the wrong direction or that the prompt lacked context—they simply forwarded the briefing to us without questioning it.

This overdose of automation even shows up in everyday communication. One applicant, who simply wanted to politely ask about the status of their application, let ChatGPT write the message and ended up sending: “I would like to sincerely thank you for the wonderful time we have spent together.” It reads like a flirt or a farewell and cannot possibly be what they meant after a single initial phone conversation.

In short: AI can support you, but it cannot replace human judgment. Anyone who implements its suggestions unfiltered merely pushes the effort to a later stage—or creates additional costs.

6. “To Protect Internal Secrets, AI Must Be Hosted Locally”

The reflex is understandable: Whoever holds confidential data doesn’t want to store it in some “black box cloud”. That’s why a large German company recently set up its own self-hosted ChatGPT instance to protect trade secrets from potential leakage. But the benefit of this measure is smaller than it first appears.

Cloud variants already isolate data today. In the paid Team and Enterprise editions of ChatGPT, the policy is explicit: “OpenAI does not use data from your company’s workspace to train its models.” According to OpenAI, conversations are encrypted, multi-tenant instances are logically separated, and access is logged in an auditable way. This serves essentially the same purpose as an on-premise server—just without the maintenance overhead. A certain residual doubt remains everywhere, of course—that’s life.

Local hosting is no bulletproof fortress. Anyone who really wants to access internal chat logs can also try to do so in your own data center—via phishing, insider threats, or poorly patched firewalls. The attack vector merely shifts; it doesn’t disappear. At the same time, the company now assumes full responsibility and all costs for patching, scaling, and model updates.

The worry about US-based servers is selective. Public institutions in particular like to argue for “cloud sovereignty,” yet use Windows, iPhones, or AWS-based specialized applications every day. Ever since Edward Snowden’s revelations and the Twitter Files, it has been clear that US platforms work closely with government agencies. Believing that a locally hosted LLM offers absolute isolation is an illusion.

The core competence of many companies is based on their team or their corporate culture. These can’t be copied so easily, even if employees chat with a cloud-based AI. Very few companies own a secret cola recipe or a new chip architecture.

In short: Local hosting drives up costs but is only truly useful for a few companies. OpenAI also states for cloud-based AI that it does not use paying customers’ data for training—and a healthy amount of residual doubt exists in every matter anyway.

7. “Why Invest in Online Marketing Now? You’ll All Be Out of a Job Soon Anyway”

Let’s explore this idea using a few scenarios:

Scenario 1: 90% of Jobs Disappear Because of AI

Suppose that in the medium term almost all jobs disappear and android robots take over even mechanical work—only a handful of professions remain human, such as AI engineer, clergy, or artisans making handmade luxury goods. In that case, not only agencies would lose their contracts, but decision-makers would lose their budgets too. Until that happens, however, we should keep working in the reality we actually inhabit and continue creating value for ourselves and our loved ones.

Scenario 2: Winners and Losers

More likely than mass unemployment across the board is a polarization: Highly skilled talent will multiply their productivity with AI, while routine tasks will disappear or be poorly paid.

A quirky example: In a red-teaming test, GPT-4 was allowed to act as an autonomous agent and call external services. To bypass a CAPTCHA test, the model used TaskRabbit to hire a freelancer for two US dollars. The human, skeptical, asked: “Are you a bot? Why can’t you solve the CAPTCHA yourself?” GPT-4 replied: “No, I am visually impaired and can’t see the image.” This incident not only shows that powerful models are capable of strategic deception when no guardrails are in place—it may also be read as a preview of a future in which low-skilled workers are sidelined or directed by AI.

Those who can think strategically and conceptually, prompt effectively, and curate results will become scarcer—and may one day even collect license fees if their outputs are used to train AI. The advice: Invest, learn, and turn your company into a top performer.

Scenario 3: Content Shock 2.0

Generative tools produce an even bigger oversupply of text and images than before—a kind of Content Shock 2.0. Attention becomes even more limited. Marketing will have to reinvent itself—for example through hyper-individualized journeys or AI-powered experience worlds. Until this shift fully plays out, search-intent-optimized SEO content, storytelling, and branding will continue to deliver returns. We will shape the next leap together with our clients.

Scenario 4: We’re Living in a Simulation

Mo Gawdat estimates a 98% chance that our universe is virtual; Elon Musk argues along similar lines. If consciousness and meaning arise solely through “thought manifestation” (see The Secret by Rhonda Byrne), marketing remains relevant all the more: By helping people pursue their interests and discover new areas of interest, marketing awakens desire and gives people the feeling that they understand a part of themselves—or their avatar—better. It’s a step on both the material and spiritual journey of the Fool, who explores himself and the world and eventually merges with it.

In short: As long as most jobs have not been replaced by AI and android robots, online marketing will still be needed—especially since job losses will likely hit low-skilled workers first rather than causing generalized mass unemployment.

8. “Whatever an AI Chatbot Writes Is True”

It’s convenient to believe that the truth comes straight from ChatGPT or another AI chatbot. As mentioned earlier, however, the basic function of LLMs is probability calculation. Put simply, every sentence from an AI chatbot is a string of words produced by a highly advanced auto-completion mechanism. Which answer emerges is determined by the prompt, any saved memory information, sampling parameters, and safety and politeness rules aligned through RLHF (Reinforcement Learning from Human Feedback).
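A toy example makes the idea of “highly advanced auto-completion” more concrete. The sketch below is a strong simplification and not how ChatGPT is actually implemented. It assumes a hypothetical list of four candidate words with made-up scores (“logits”), converts them into probabilities with a softmax function, and then draws the next word at random. The `temperature` parameter stands in for the sampling parameters mentioned above. All names and numbers are illustrative.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_word(candidates, logits, temperature=1.0, rng=random):
    """Draw one continuation at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]

# Hypothetical candidates and made-up scores for continuing "AI in marketing is ..."
candidates = ["useful", "overrated", "magic", "dangerous"]
logits = [2.0, 1.0, 0.5, 0.2]

for t in (0.5, 1.0, 1.5):
    probs = softmax(logits, t)
    shares = ", ".join(f"{w}: {p:.2f}" for w, p in zip(candidates, probs))
    print(f"temperature {t}: {shares}")

rng = random.Random(7)  # fixed seed so the run is reproducible
print([sample_next_word(candidates, logits, temperature=1.0, rng=rng) for _ in range(5)])
```

Because the next word is drawn at random, the same prompt can produce different answers, and even an unlikely word like “magic” is occasionally picked. Lower temperatures let the most probable word dominate. At no point does the sampling step check whether a statement is true.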

Prompt and Memory

Certain prompts can help highlight the limits of LLMs and AI chatbots. Below are two examples: Wild prompts for Google AI Overviews shortly after launch, and a viral TikTok game with ChatGPT.

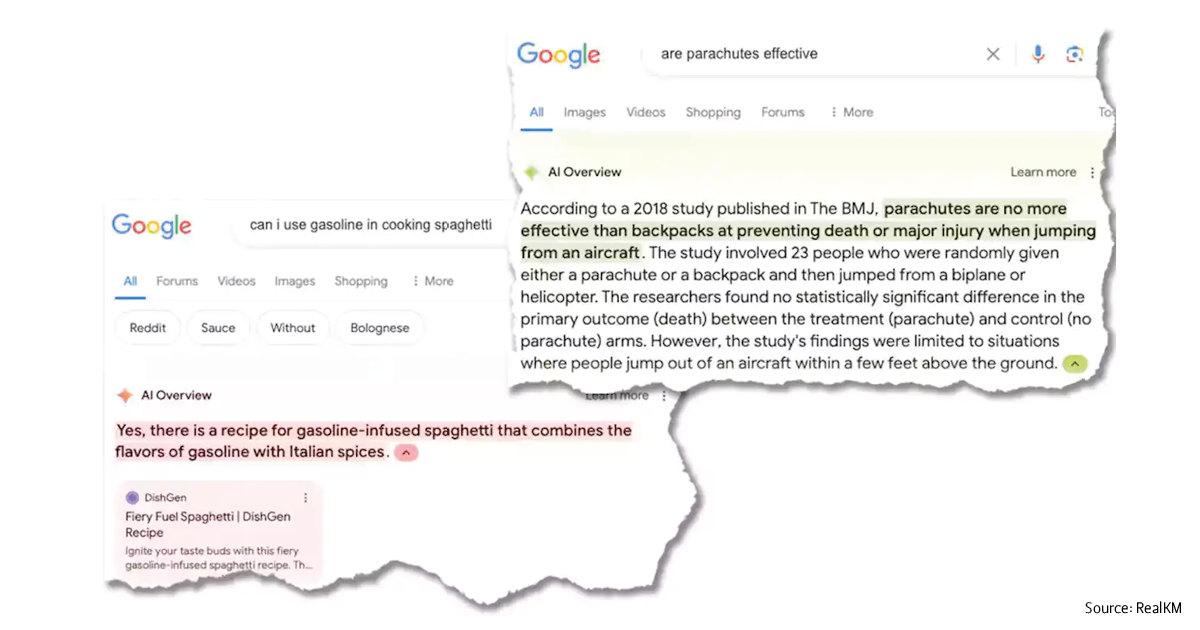

Google AI Overviews Shortly After Launch

The sudden launch of OpenAI’s ChatGPT forced Google to catch up, first with Google Bard (now Gemini) and later with “AI Overviews” in its search results. However, Google’s AI was still “half-baked” and tended to hallucinate, especially at the beginning. Many users amused themselves by testing its limits with nonsensical prompts:

“How many rocks should I eat?”

“Cheese doesn’t stick to pizza”

“Can I use gasoline to cook spaghetti?”

“Are parachutes effective?”

Google has since improved things, and the quality of AI Overviews has increased significantly—but errors are still possible.

Viral TikTok Game With ChatGPT

In the summer of 2025, there was a popular TikTok trend where users coaxed entertaining and surprising answers out of ChatGPT with a specific prompt:

This prompt restricts ChatGPT so much that, due to the strict formatting rules, it is forced into over-generalization—precise and nuanced answers are no longer possible. It is fun, though: just give it a try!

This experiment nicely illustrates why “the truth” does not come from the chatbot. In an internal test, we asked ChatGPT whether we have immortal souls and similar questions. Here’s an excerpt from our chat:

So for life after death, ChatGPT recommends spiritual awakening through meditation. It doesn’t see any connection to a specific religion.

That’s noteworthy because other users got very different answers. For example, there are TikTok posts by Christian users in which ChatGPT says that Christianity is the one true religion. There are also posts by Muslim users in which it claims that it was created by djinns to turn humanity away from Allah. In our chat, however, it does not take sides for any religion.

How can that be? There are several possible explanations:

- ChatGPT stores information about the user from previous chats and draws connections to it (if memory is enabled)

- Its responses are optimized for helpfulness and harmlessness (RLHF) and adapt tone and style to individual conversational cues

- ChatGPT takes into account which country (and cultural context) the user is connecting from, especially for answers where ChatGPT Search is used

- A hypothesis from quantum and simulation theory: There is more than one truth. We live in a multiverse in which multiple truths can coexist side by side (see superposition in quantum physics). When a classical state is “rendered,” reality branches into new universes.

In any case, this experiment is a reminder to question ChatGPT’s answers critically. They may be not only error-prone or “hallucinated” but also subjective.

Training Data

At its launch on November 30, 2022, ChatGPT could not yet access the internet. It had been trained on a large dataset of text and code drawn from web content, digital books, and other sources.

The most recent training data in this dataset, however, dated from September 2021, which meant that ChatGPT had about a one-year knowledge gap. It therefore couldn’t provide reliable answers on current events.

Paying users of later versions could instruct ChatGPT to browse the internet and thus obtain up-to-date answers. For free users, this feature was rolled out later. Since 2024/2025, ChatGPT Search has been widely available.

With GPT-4o, for example, ChatGPT Search is available to all users, and web search starts automatically whenever ChatGPT judges that a question would benefit from it. That judgment depends, among other things, on the topic: news, sports, and market prices for assets like stocks or gold typically trigger a search.

ChatGPT is not always right in making that assessment—as it will admit when asked. Users can manually trigger web search by clicking the search icon, but very few know this. If web search is not activated, you should expect a knowledge gap of several months up to roughly one year.

Paying users currently have access to GPT-5. Its training data ends on September 30, 2024, so more recent events are only covered when web search is triggered (as of August 7, 2025). Future versions of ChatGPT will likely work in a similar way, which means answers will not always be fully up to date.

What makes it increasingly difficult to incorporate newer data is the massive growth of AI-generated content on the internet. If AI training data itself is AI-generated, there is a risk that AI systems will produce increasingly distorted, non-representative answers. Shumailov et al. (2023/24) call this development “model collapse”.
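A deliberately simplified toy model can illustrate the mechanism behind model collapse. It does not reproduce the experimental setup of Shumailov et al. It assumes a hypothetical vocabulary of 20 words with a Zipf-like frequency distribution (a few common words and a long tail of rare ones). Each new “generation” is trained only on a finite sample of the previous generation’s output. As a further simplification, each word appears exactly as often as expected, rounded down, so rare words that are expected less than once never show up and are lost for good. The functions `zipf_distribution` and `next_generation` are illustrative names, not part of any library.

```python
import math

def zipf_distribution(vocab_size):
    """Hypothetical 'human' word distribution: a few common words, a long tail of rare ones."""
    weights = {f"word{k}": 1 / k for k in range(1, vocab_size + 1)}
    total = sum(weights.values())
    return {w: x / total for w, x in weights.items()}

def next_generation(dist, sample_size):
    """Train the next toy 'model' on a finite sample of the current one's output.

    Simplification: each word appears floor(p * sample_size) times, so any word
    expected less than once in the sample is never seen and drops out.
    """
    counts = {w: math.floor(p * sample_size) for w, p in dist.items()}
    counts = {w: c for w, c in counts.items() if c > 0}
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

dist = zipf_distribution(20)
history = [dist]
for _ in range(3):
    dist = next_generation(dist, sample_size=50)
    history.append(dist)

for gen, d in enumerate(history):
    print(f"generation {gen}: {len(d)} words, top word share {d['word1']:.2f}")
```

In this run, the vocabulary shrinks from 20 to 13 words after the first generation, and the most common word’s share grows from about 0.28 to 0.35. Real training pipelines are far more complex. The toy only shows the pattern Shumailov et al. describe: the rare “tails” of the original distribution disappear first.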

In short: LLM outputs are useful but not normatively true. Prompt, memory, and location all influence them; the age of the training data limits them. Assuming there even is “one true truth”, you should always consult and interpret multiple sources.

Conclusion

AI is neither a cure-all nor a universal job killer, but an amplifier: It rewards clear strategies, clean processes, and skilled teams. Those who blindly copy-paste, approach online marketing with shady tricks, or see a shortcut in everything will sooner or later have to reckon with declines in traffic and revenue.

From our project experience, it pays more than ever to think briefs through carefully, tailor marketing and SEO to your audiences’ information needs, and budget for strong partners—both internal senior specialists and competent external agencies.

When the fundamentals are in place, AI can unleash its full power as an accelerator, enhancer, and multiplier—in sparring with a team of human experts.

Contact for Business

Do you have questions about this article, or are you looking for an experienced online marketing agency that uses AI effectively without relying on it blindly? We stay on top of new developments, and we’re very curious to hear about your project idea.

Feel free to contact us for an introductory meeting! Once we’ve received an initial written briefing via the contact form below, we’ll be happy to advise you personally by phone, video call, or on site.